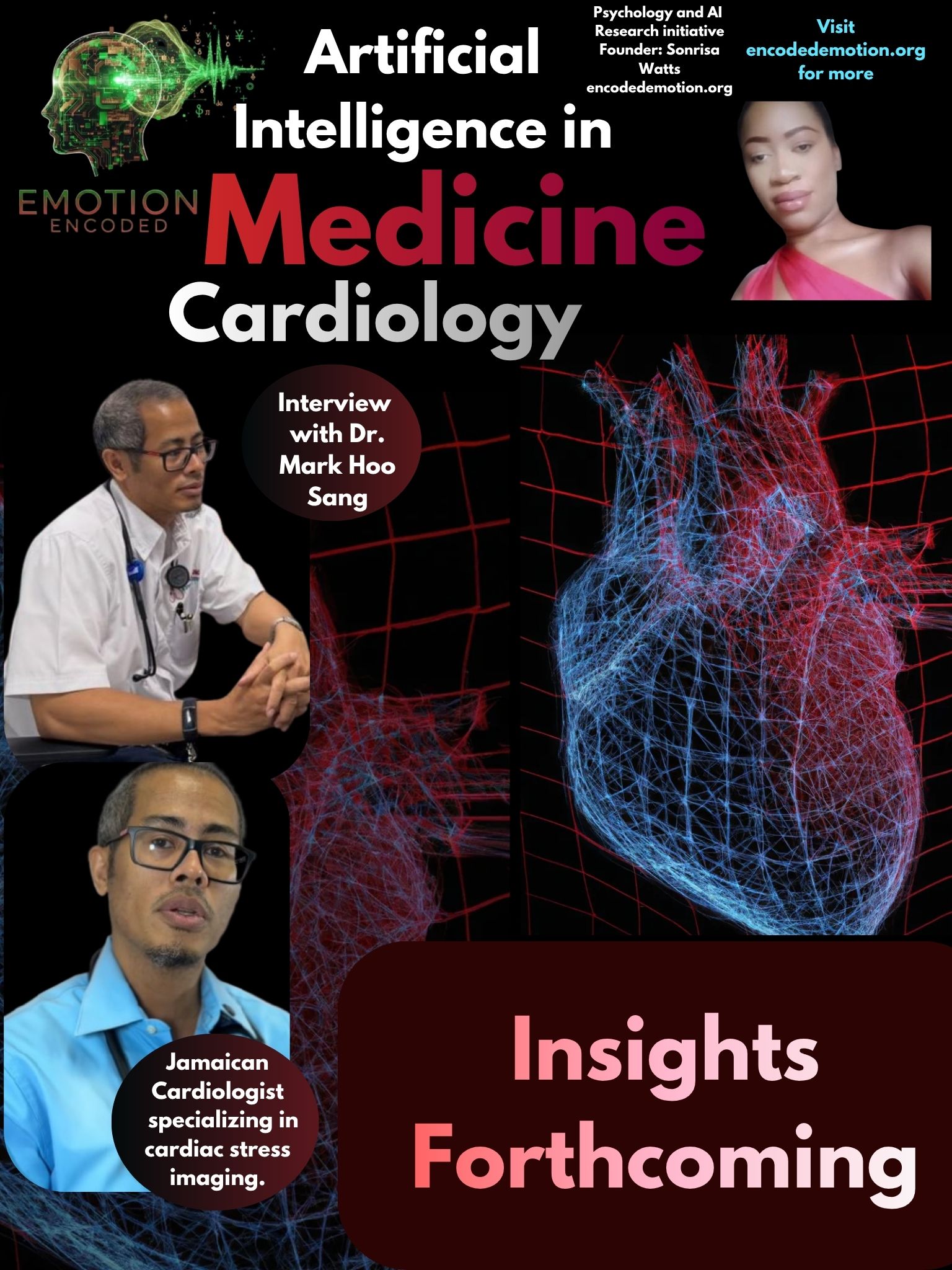

Emotion Encoded: Artificial Intelligence in Cardiology

Dr. Mark Hoo Sang

Dr. Hoo Sang’s insights highlight the tension between using AI for speed and keeping a sharp clinical edge. He presents as a pragmatic expert who treats algorithms as high-velocity tools rather than absolute authorities. His perspective suggests that while AI can handle routine triage, it also creates a psychological pull that can lead to dependency on automated suggestions over time.

Question: If an AI recommends a treatment you believe is harmful, even if the data says it’s statistically best, what are you going with?

"If an AI recommends a treatment I believe to be harmful, I would have to do further research in regards to the actual original articles. My decision is based on where the body of evidence leads."

This approach shows a high level of resistance to automation bias. Instead of simply picking between his gut and the machine, he resets the entire process by returning to primary research. For a specialist at this level, a statistical "best" is secondary to the actual evidence, which suggests that the doctor's role is to act as the final filter, ensuring the math aligns with the human reality.

Question: Do you worry AI might teach future doctors to stop thinking for themselves?

"I have great concerns that AI will prevent doctors from thinking for themselves as I have seen my personal use go up."

This is a very honest take on the de-skilling trap. If a seasoned cardiologist is noticing his own reliance on AI increasing, the risk for junior doctors is even higher. They may never build the foundational mental muscle memory needed to spot errors if they let software do the heavy lifting from the start of their careers.

Question: Would you prefer an AI that shows how it predicts a heart attack for each patient, or AI that only gives a risk score without explanation?

"The answer to this question would depend on the case scenario and how much time I have. If I have the time to cerebrate more, I would like an explanation. In most instances the score is all I'd need."

This highlights the reality of a high-volume clinic versus a research lab. When things are moving fast, a simple risk score is enough for triage. However, for complex or high-stakes cases, he wants to see the reasoning so he can think through the problem properly. It is a dual-mode approach: using AI for speed when possible, but demanding transparency when the situation gets difficult.

Sonrisa Watts // Emotion Encoded // 2026