Burnout, Bias, and the Black Box

In the high-volume world of radiology, where thousands of images are read daily and a single missed pixel can change a life, human visual acuity is under constant pressure. I spoke with Dr. Nalini Kokaram to explore how clinical intuition interacts with automated detection.

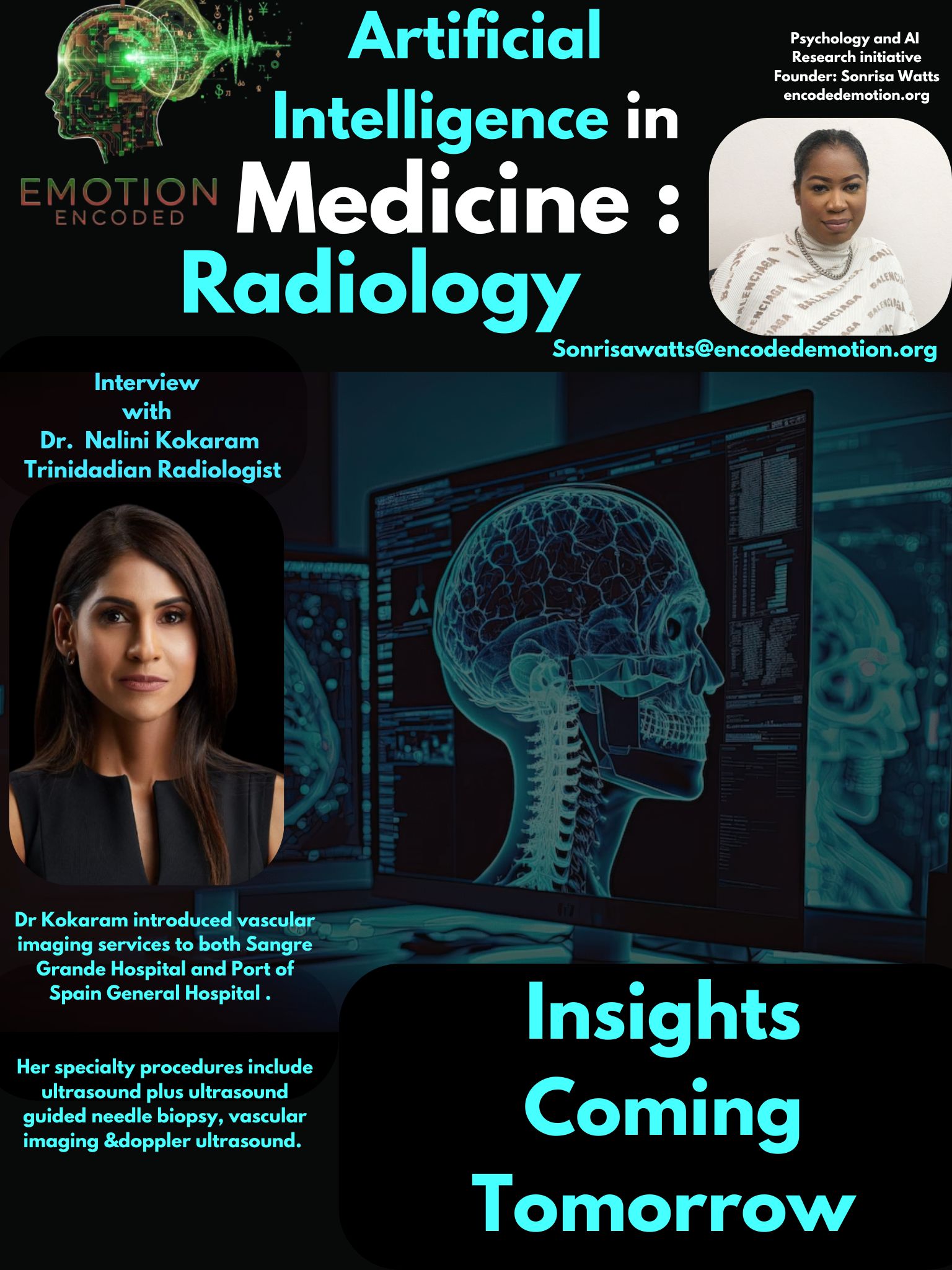

Dr. Nalini Kokaram, MBBS, FRCR

Dr. Kokaram is a Fellow of the Royal College of Radiologists (UK) and a graduate of UWI, with over two decades of clinical experience. She has served as Head of Radiology at Sangre Grande Hospital and Port of Spain General Hospital, and she pioneered the introduction of vascular imaging to the public health sector in Trinidad and Tobago.

The Myth of Shared Blame

Question: Do you think other radiologists may use AI because it’s better at finding things, or because it’s a safety net to share blame if a mistake happens?

Dr. Kokaram's Response: "As Radiologists, our fundamental purpose is to diagnose the underlying condition and guide the referring clinician... So 'sharing blame if a mistake happens' is not a consideration. In a normal work day, it is understandable how a Radiologist may be prone to human errors as focus wanes. It is for this reason, I believe that AI is an asset... it acts as an extra pair of eyes for the Radiologist to ensure diagnostic accuracy."

Dr. Kokaram frames AI as a practical safeguard against fatigue rather than a legal shield. In a sector where imaging volume outpaces the number of specialists, AI offers an "extra pair of eyes" that never gets tired, even when the human ones do.

The Requirement of Explanation

Question: If an algorithm gives a diagnosis but can’t explain “why” or show the specific pixels, do you accept the finding or ignore the AI?

Dr. Kokaram's Response: "For every differential that is disregarded there must be a data driven reason. So if an algorithm provides a diagnosis without an explanation, then I think it should be ignored as ultimately we, as Radiologists, must base all our diagnoses with clear and convincing data driven evidence."

Trust in radiology is built not on the "what" but on the "why." If an AI cannot participate in the logical process of exclusion, its findings cannot be integrated into a final clinical report.

Gut Instinct vs. The 99%

Question: When a 99% accurate AI says a scan is Normal but your gut says it’s High Risk, do you find yourself looking for reasons to agree with the machine?

Dr. Kokaram's Response: "Calling something Normal because AI claims it is, even though your gut—your years of knowledge and experience—tells you otherwise, would be irresponsible. It is always better practice to seek more information before committing to a final diagnosis. This protocol will serve Radiologists well as we navigate a new world in AI."

Dr. Kokaram defines "gut instinct" not as a guess, but as the synthesis of years of knowledge and experience. While AI is a tireless partner, it cannot replace the human courage required to question algorithmic certainty and seek more information before committing to a diagnosis.

Sonrisa Watts // Emotion Encoded // 2026