Surgical Intuition vs. Algorithmic Authority in the Operating Room

When cutting-edge AI is dropped into high-stakes surgical environments, does it act as a safety net or a psychological trap? To find out, we interviewed one of the Caribbean’s leading surgical authorities to see how master clinicians view the rise of machine intelligence.

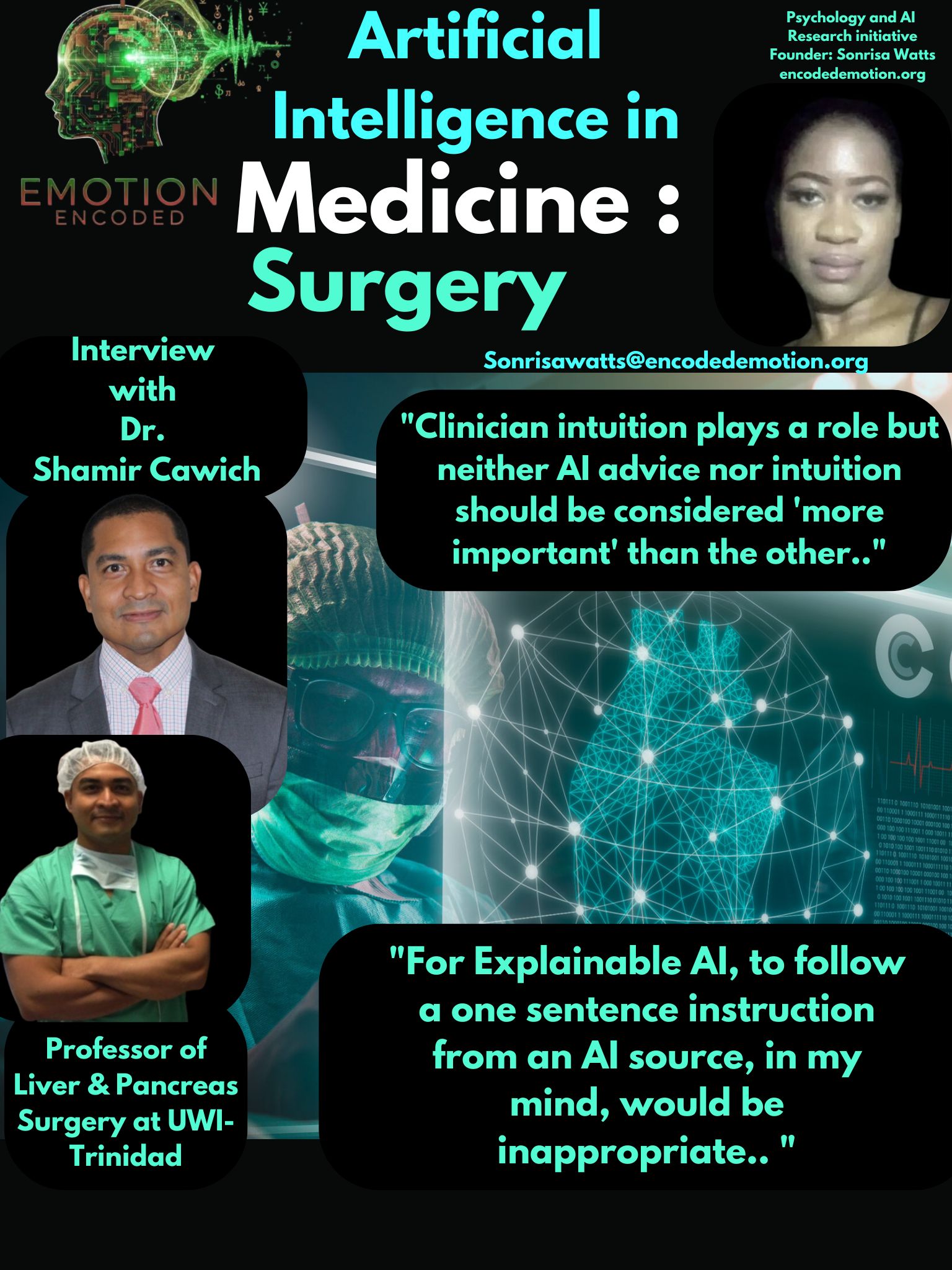

Dr. Shamir Cawich is a full Professor of Liver and Pancreas Surgery at the University of the West Indies. He is a Fellow of the American College of Surgeons, has authored hundreds of peer-reviewed research articles, and has pioneered advanced surgical proctorships and mentorships across the Caribbean. He holds several advanced fellowships, including from the Royal College of Surgeons and Imperial Medical in the UK, specializing in Minimally Invasive Surgery and Robotic Navigation.

Experience vs. Automation

Question: If the AI tells a surgeon to do one thing, but their 20 years of experience says to do another, do you think they feel pressured to trust the screen over their own eyes?

Dr. Cawich's Response: "While I understand the value of artificial intelligence, there are also limitations. Most significantly, it is an accumulation of knowledge / data acquired from multiple sources, many without rigorous scientific validation. Therefore, while I would consider the advice given by an AI source, I think it must also be validated by the clinician. In the end, it is the clinician who is responsible for patient outcomes and so his or her judgment should be the predominant source of decision, with suggestions but not instructions, by the AI source."

Surgeons are not looking for an automated pilot; they are looking for a co-pilot. Dr. Cawich highlights a massive gap in current AI development: the lack of accountability. An algorithm can process data, but it cannot stand in a courtroom or carry the moral weight of a patient's life. True integration means treating AI as a suggestion engine, not an instructor.

The Danger of "Smooth" Explanations

Question: In the operating room, would you prefer a super simple AI that just gives a one-sentence "why," or a complex AI that gives you deep data and statistics?

Dr. Cawich's Response: "While a one-sentence why is convenient, the clinician must be able to evaluate the data / evidence that is behind every clinical decision. To follow a one-sentence instruction from an AI source, in my mind, would be inappropriate. Surgeons must always evaluate the data and perform all manoeuvres with purpose and reason - not solely by instruction. Therefore, deep data and statistics are required."

Tech developers often assume busy experts want over-simplified, push-button interfaces. Dr. Cawich’s response points the other way: oversimplification breeds distrust. In a crisis, a master surgeon doesn't want a smooth one-liner from a computer; they want the underlying data and statistics so they can verify the recommendation themselves. If AI hides its work, experts will reject it.

Intuition vs. Calculation

Question: Do you personally think it's always clinical intuition over any type of advice an AI can give in surgery?

Dr. Cawich's Response: "No. Clinician intuition plays a role, but neither AI advice nor intuition should be considered 'more important'. They are both valuable sources of information that contribute to any decision. The clinician's duty is to combine all sources of information / data and to make a calculated decision on which will be better in an individualised circumstance on the operating table."

This is the ultimate take on modern human-machine collaboration.

The master surgeon's ultimate duty is to take disparate data points, clinical gut instinct and machine data alike, and make a localized, split-second human decision.

Sonrisa Watts // Emotion Encoded // 2026