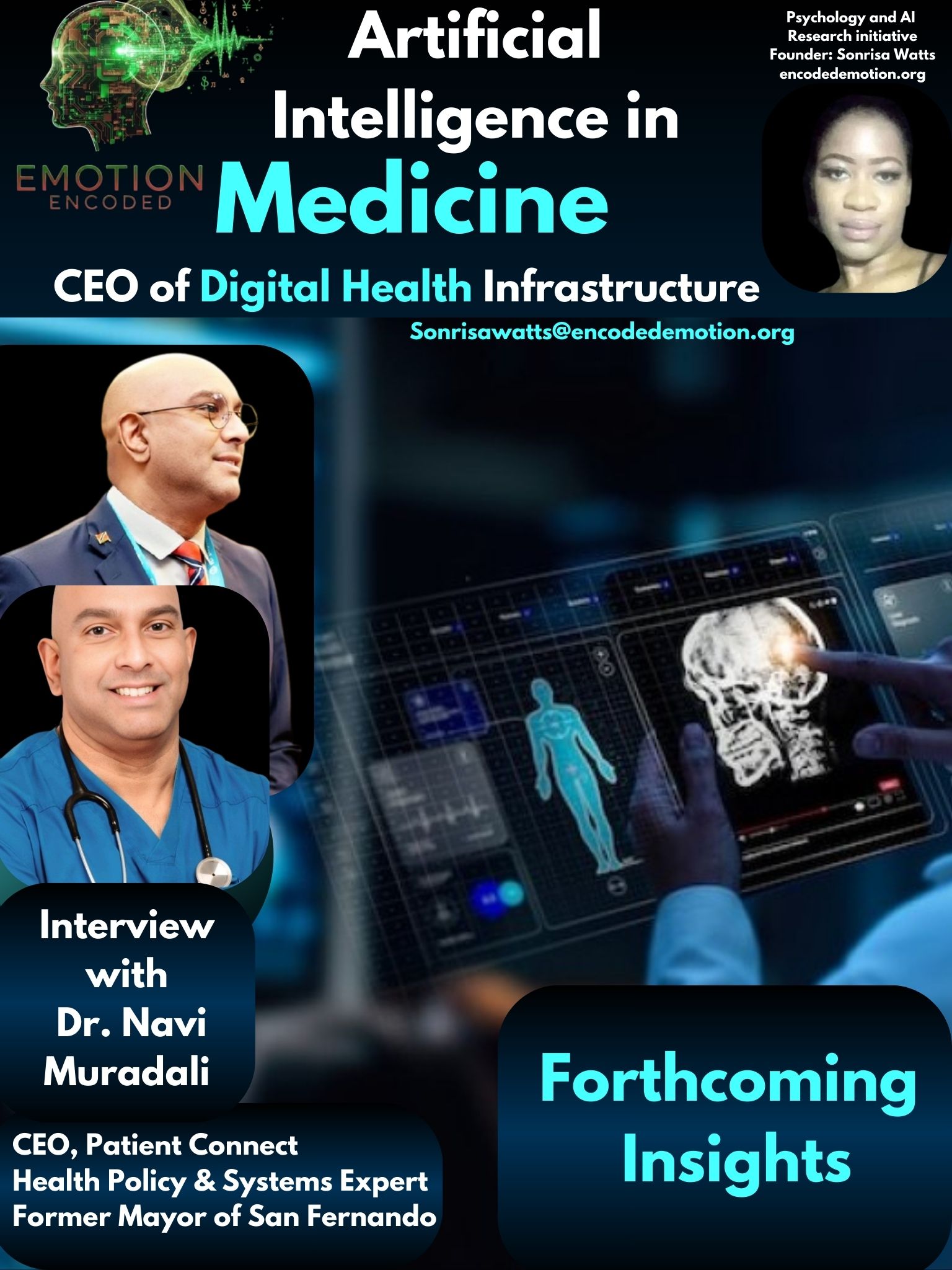

The "Convincing" Trap: Why Dr. Navi Muradali Thinks AI Needs a Human Co-Pilot

In the latest installment of the Emotion Encoded expert series, I spoke with Dr. Navi Muradali about the friction between clinical intuition and algorithmic advice. Our conversation focused on the reality of medical accountability and why "Explainable AI" might actually create new risks for seasoned professionals.

1. On Trust

I began by asking what truly drives the hesitation in the medical field: is it the data itself or something more personal?

"I don’t think it’s just about trust in the data; it’s really about responsibility. At the end of the day, the physician is the one accountable for the outcome, so there’s always going to be some hesitation in relying on a system you didn’t personally train or fully understand.

"There’s also the human side of medicine. Over time, you develop a kind of clinical instinct, that “feel” for a patient that doesn’t always show up in data. In the AI tools we’re building for employee wellness in the Caribbean, we’re very clear that AI is there to support that instinct, not replace it. It can pick up patterns across sleep, activity, or metabolic risk that we might miss at scale, but the interpretation still has to come from the doctor."

2. On Explainable AI

We then discussed the double-edged sword of "Explainable AI" and how a logical-sounding justification can sometimes mask a clinical error.

"That’s actually a real concern. Just because an explanation sounds convincing doesn’t mean it’s right, and in some cases, it might make it harder to question the result.

"In medicine, we’re used to this. A scan or lab result can look very clear-cut, but we still step back and ask, “Does this actually fit the patient in front of me?” AI has to be treated the same way. In the systems we’re developing, we try not to present AI as giving a final answer, but rather as showing trends and signals that the physician can interpret. That way, you’re less likely to blindly accept something that doesn’t make clinical sense."

3. On the Final Call

Finally, we looked at the future landscape of the Caribbean workforce and whether the physician remains the ultimate authority.

"I think the physician will still make the final call, but the way we get to that decision is definitely going to change. Medicine has always used tools: guidelines, scoring systems, imaging. AI is really just the next step in that evolution.

"Where it becomes really powerful is in prevention. AI can continuously look at things like wearable data, body composition, and lifestyle habits, things we don’t traditionally monitor closely. AI can also flag risks much earlier. In platforms like OMNI, which we’re developing for workplace health in the Caribbean, AI acts more like a co-pilot. It helps you see what’s coming, but the responsibility for the decision still sits with the physician."